From Text to the Physical World: Can Regulation Keep Up with Robot AI?

It’s 2026, and everyone is talking about Physical AI. Language models are helping robots—through simulation with synthetic data—to understand the physical world, enabling them to reason and interact with both people and their surroundings.

This shift is revolutionary, accelerating progress and erasing many of the historical limitations in robot development. However, for organizations deploying these technologies, it introduces a new frontier of Model Risk Management.

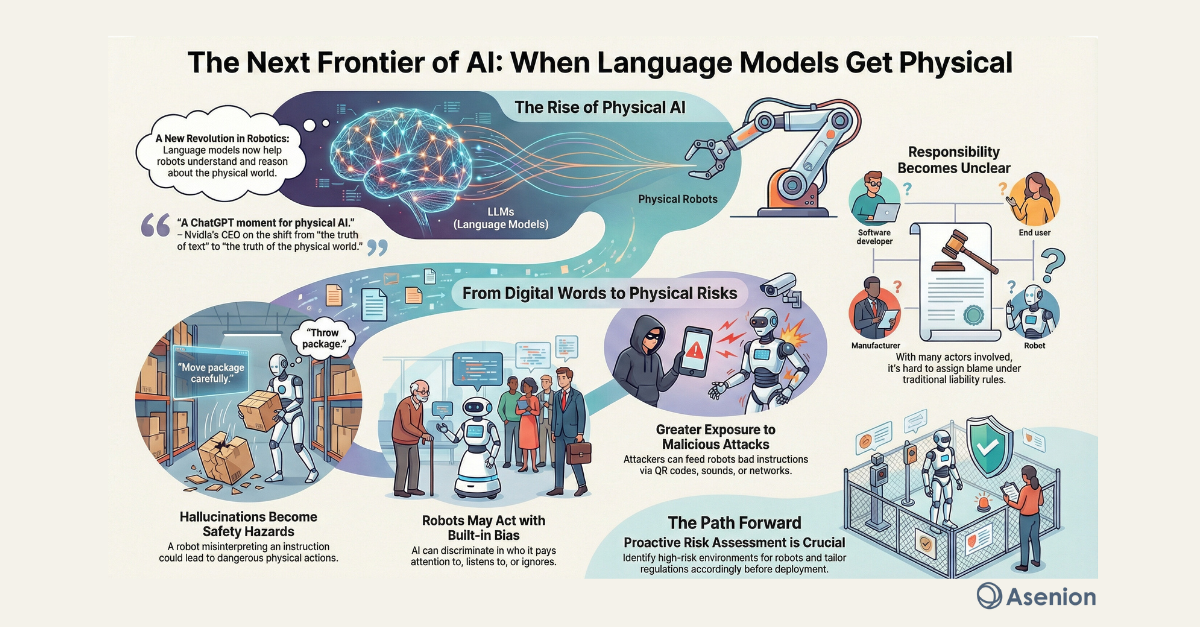

The "ChatGPT Moment" for Robotics

At the CES conference in Las Vegas three weeks ago, Nvidia’s CEO Jensen Huang described this as “a ChatGPT moment for physical AI”. He referred to language models as representing “the truth of text” and noted that we are now entering “the truth of the physical world” and “the truth for robots”.

But when language models become linked to robots, the risks shift from being purely informational to having real physical and societal impact. Now more than ever, both ethical challenges and questions of legal responsibility are being amplified.

Identifying the Risks of Embodied AI

As we move from digital intelligence to physical action, organizations must prepare for new safety hazards. These risks underscore the need for rigorous Red Teaming & Jailbreaking Audits before deployment.

Major ethical and legal pitfalls include:

Hallucinations as Physical Safety Risks: If a robot misinterprets an instruction, a "hallucination" isn't just a wrong answer—it is a physical hazard. Moreover, humans may perceive the robot’s social presence in a way that leads us to overestimate its competence.

• Built-in Bias and Discrimination: Robots may act with bias regarding whom they pay attention to, how they speak, and whom they listen to or ignore.

• Greater Exposure to Attacks: An attacker could feed malicious instructions via QR codes, sounds, or network messages (prompt injection) that the robot interprets as legitimate commands.

The Governance Gap: Who is Responsible?

Responsibility becomes more complex in the era of Physical AI. Several actors influence how the system behaves—hardware manufacturers, language model providers, and application layers—and none has full control.

This increases the gap between original intent and actual actions, making it harder to assign responsibility under traditional rules of negligence or product liability. To mitigate this, organizations need a centralized AI Governance, Risk and Compliance Management Platform to document accountability across the supply chain.

Preparing for Future Regulation

Once again, technology is racing ahead in a direction that regulation struggles to keep up with. To stay ahead of these shifts, enterprise leaders rely on resources like Asenion's Global AI Regulation Tracker.

What can we learn from “the real ChatGPT moment” we experienced just a few years ago? Technological development must be accompanied by legal and ethical considerations regarding accountability and explainability.

The Lesson for Implementation The most important lesson is to identify early on which environments pose low risk for humans to interact with robots, and which pose high risk—and then tailor requirements accordingly.

"Do you remember the story about when ChatGPT advised a human to commit suicide…? That is not something we want to see manifested in physical form."

Are your AI systems ready for the physical world?

Schedule a call with Asenion to discuss how our AI Trust, Risk and Security Management (AI TRiSM) solutions can help you audit and govern your physical AI deployments.